Two weeks ago the first phase of the Monash node of the NeCTAR RC (Research Cloud) went live to the public (yes, this post is late!). If you’re an RC user you can now select the “monash” cell when launching instances. If you’re not already using the RC then what are you waiting for?! Get to http://www.nectar.org.au/research-cloud and spin up your first server.

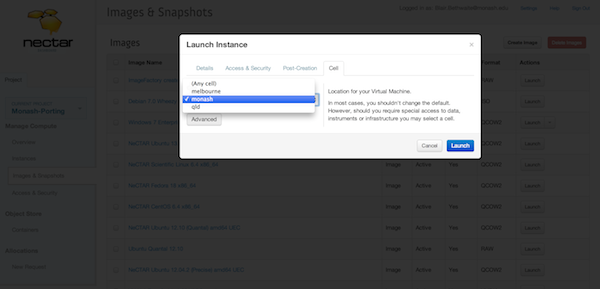

You can launch instances on the Monash node via the Dashboard (see pic), through the OS API by specifying the scheduler hint “cell=monash”, or via the EC2 API by specifying availability-zone=“monash”. Those last two instructions are likely to evolve with future versions of the APIs, so stay tuned to http://support.rc.nectar.org.au/support/faq.html (and search for “cells”) for current instructions.
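For the OS API route, here is a minimal sketch of what the request might look like, assuming the Nova compute API accepts scheduler hints under a top-level `os:scheduler_hints` key next to the server definition. The helper name `build_boot_request` and the image and flavor values are hypothetical placeholders, and the hint itself may change as noted above, so treat this as an illustration rather than the official recipe.

```python
import json


def build_boot_request(name, image_ref, flavor_ref, cell="monash"):
    """Build a JSON-serialisable body for a Nova 'create server' request.

    Assumption: scheduler hints are passed under "os:scheduler_hints",
    alongside (not inside) the "server" object.
    """
    return {
        "server": {
            "name": name,
            "imageRef": image_ref,    # hypothetical placeholder value
            "flavorRef": flavor_ref,  # hypothetical placeholder value
        },
        # Ask the scheduler to place the instance in the named cell.
        "os:scheduler_hints": {"cell": cell},
    }


if __name__ == "__main__":
    body = build_boot_request("my-first-server", "IMAGE_UUID", "FLAVOR_ID")
    print(json.dumps(body, indent=2))
```

You would POST a body like this to the compute endpoint's servers resource with your auth token. Keeping the hint separate from the server definition means the same server description can be reused for another cell by changing a single argument.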

A bit of background about Monash phase 1.

There are about 2,300 cores available in the current deployment, and phase 2 will more than double this. The hypervisors run Ubuntu 12.04 LTS on AMD Opteron 6234 CPUs with 4 GB of RAM per core.

What’s different from the other NeCTAR RC nodes?

The ephemeral storage associated with each instance is delivered by a local RAID10 on each hypervisor (as opposed to a pool of shared storage, e.g., an NFS share). Live migration is still possible thanks to KVM’s live block migration feature (anybody interested should note that block migration is replaced with live block streaming in the upstream QEMU code). This means ephemeral storage performance actually scales as you add instances, more or less predictably, subject to activity from co-located guests. As OpenStack incorporates features to limit noisy neighbour syndrome we’ll be able to take full advantage of those too.

We pass CPU information from the host through libvirt to the guests, to give the best possible performance to code that can make use of it.
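If you want to see which CPU features actually reach your instance, here is a small sketch you could run inside a Linux guest. It assumes an x86 guest where `/proc/cpuinfo` has a `flags` line; the helper names and the list of wanted flags (`sse4_2`, `avx`, `aes`) are just illustrative choices, not a statement of what the Monash hypervisors expose.

```python
import os


def parse_cpu_flags(cpuinfo_text):
    """Return the set of CPU feature flags from /proc/cpuinfo-style text."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            # The line looks like "flags\t\t: fpu vme de ..."
            _, _, value = line.partition(":")
            return set(value.split())
    return set()


def missing_flags(cpuinfo_text, wanted):
    """Return the wanted flags that the CPU does not advertise, sorted."""
    return sorted(set(wanted) - parse_cpu_flags(cpuinfo_text))


if __name__ == "__main__":
    path = "/proc/cpuinfo"
    if os.path.exists(path):
        with open(path) as f:
            text = f.read()
        # Illustrative flags only; pick the ones your code depends on.
        print("missing:", missing_flags(text, ["sse4_2", "avx", "aes"]))
```

An empty list means every flag you asked about is visible to the guest, so code compiled to use those instructions should be able to run with them.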

Who built it?

Thanks go to a big team here at Monash across both MeRC (the Monash eResearch Centre) and eSolutions (Monash’s central ICT provider), and also to the folks at NeCTAR and the NeCTAR lead node at the University of Melbourne. Of course it wouldn’t have been possible without OpenStack and the community of contributors around it, so thanks all!