Back in 2012, our submission to NeCTAR planned for R@CMon to be delivered in two phases. The first was a commodity phase, letting the ideals of en masse computing dominate technical choices. We have been operating phase 1 since May 2013. Our new specialist second phase went live in October! R@CMon phase 2 (the R@CMon RDC cell) scales out high-performance and accelerated hardware as driven by the demands of the precinct. Often ‘big data’ is just not possible without ‘big memory’ to hold the problem space without going to disk (100x slower). Often the need is ‘more memory’, not ‘more cores’. Often it is ‘I need to interact with a 3D model’. And so on. R@CMon is now truly a scalable, critical mass of self-service, on-demand computing infrastructure. It is also the play-pit where research leaders can build their own 21st century microscopes.

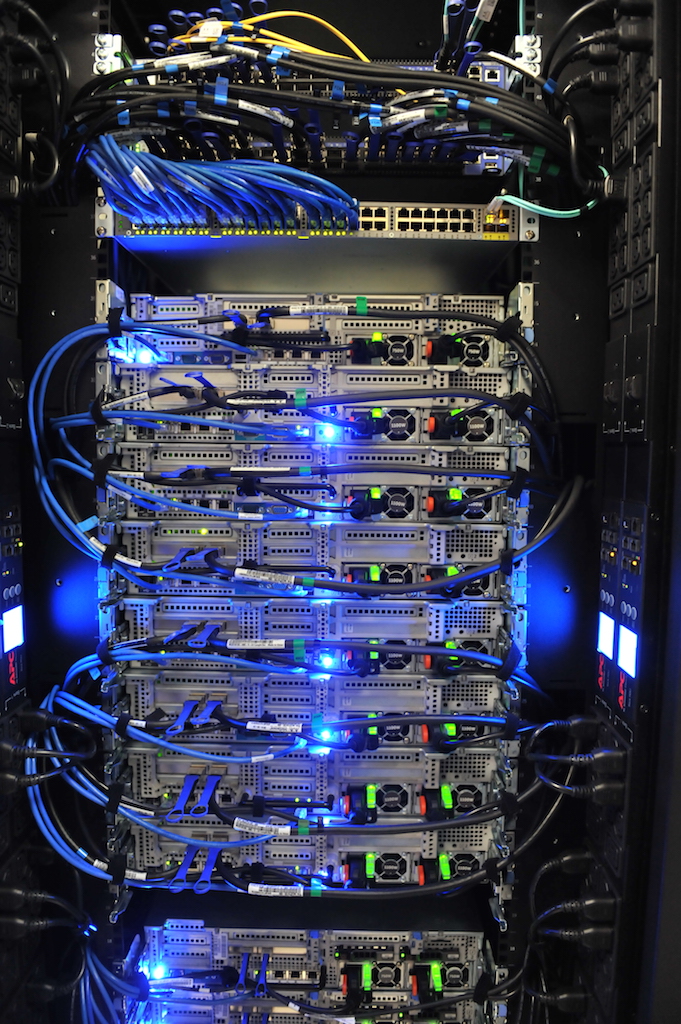

One of the four racks of NeCTAR monash-02. From top to bottom: Mellanox 56G switches, management switch, R820 compute nodes, R720 Ceph storage nodes

On top of phase 1, phase 2 adds:

- 2064 new Intel virtual cores

- 3 nodes with 1TB of RAM

- 10 nodes with GPUs for 3D desktops

- 3 nodes (the large-memory ones) with high-performance PCIe SSD

- All standard compute nodes mix SAS & SSD for low-latency local ephemeral storage

- All nodes with RDMA-capable networking (Remote Direct Memory Access: the stuff that makes fast, large-scale, multi-node HPC jobs possible)

As with phase 1, the entire infrastructure is orchestrated through OpenStack and presented on the Australian Research Cloud. R@CMon is once again pioneering research cloud infrastructure, virtualising all these specialist resources.

Over the next week we’ll blog with emerging examples of GPUs, SSDs and 1TB memory machines…