Executive Summary

After two years of operation and 500 allocated projects (excluding trials), we propose an evolution of the NeCTAR Research Cloud (herein “RC”) flavor offerings, to:

- introduce new RC-wide classes of compute and memory optimised flavors tailored more specifically to particular workloads;

- have standard/base flavors more aligned with the commercial cloud alternatives;

- give the RC Nodes greater flexibility in establishing utilisation-improvement strategies.

We believe this approach will provide immediate utilisation gains without memory overcommitment, whilst still providing scope for Nodes to pursue further overcommitment/consolidation as may be appropriate for their workloads and architecture. The remainder of this document details the background and proposes an RC-wide implementation strategy and some example Node deployment scenarios. Skip to Core recommendations below for the proposal.

Overview

As the RC continued to gain popularity and new users over the course of 2013 we got our first taste of what happens once cloud Nodes (capital ‘N’ indicating a zone) get full. The operational Nodes have also had a chance to take stock of the new paradigm and look for opportunities to improve ROI and outcomes for their users. We are now at a juncture where it is appropriate to revisit and refine the basic RC menu – the virtual machine hardware footprints (commonly known as flavors or instance-types) which make up the primary resource consumption patterns of the RC.

With the capacity pressure, Nodes have discussed and (in some cases) implemented resource overcommitment (i.e., allocating more virtual machine memory and/or CPU cores than the physical host actually has). This is possible thanks to various kernel and hypervisor technologies, and valuable because instances generally do not use all their assigned memory or CPU resources at any one time. Whilst a notional level of overcommitment is likely to be safe and even seems to be quite common in private cloud deployments, the technique is a blunt tool with many caveats, including: non-standardised adoption in the RC; general unpopularity amongst the end-user community; and, perhaps most significantly, an element of inherent risk, since unmitigated memory pressure can result in instances being killed by the OOM killer or in crashed systems. A complementary strategy, requiring less overcommitment to achieve the same utilisation, is to fit flavors to workloads. This will (hopefully!) result in greater workload consolidation and empower users to select the best virtual hardware to match their application/s.

The general idea of this proposal is to further develop the RC flavors to provide a variety of configuration and QoS. We assume CPU overcommitment for general-purpose/standard flavors; this is standard practice in virtualised environments, and experience to date shows ample headroom for doing so in the RC context. Importantly, we propose to use recent middleware features (cgroups CPU share quotas) to limit CPU contention and guarantee relative CPU QoS for different flavors, whilst also specifying a class of compute flavors for which no overcommitment is recommended.
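To illustrate how CPU shares give relative rather than absolute guarantees, here is a minimal sketch. It assumes a simplified model in which all listed instances are single-vCPU, permanently runnable and competing for the same contended CPU; the function name is hypothetical, and the share values are the ones proposed in the flavor table later in this document.

```python
def cpu_time_fractions(shares_by_instance):
    """Return each instance's fraction of contended CPU time.

    cgroups cpu.shares are relative weights: when every instance is
    runnable and competing, each receives shares / sum(shares).
    Without contention the weights have no effect.
    """
    total = sum(shares_by_instance.values())
    return {name: shares / total for name, shares in shares_by_instance.items()}


# Share values taken from the proposed flavor table (simplified scenario).
contending = {"m2.tiny": 256, "m2.small": 1024, "c2.1": 2048}
fractions = cpu_time_fractions(contending)
for name, fraction in fractions.items():
    print(f"{name}: {fraction:.1%} of the contended CPU")
```

In this model a c2.1 instance always receives twice the CPU time of an m2.small when both are busy, whatever else is running on the host.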

Motivations

- High demand and slow capacity ramp up.

- Improve efficiency.

- Current flavors do not offer any variety of CPU-to-RAM ratio and so don’t cater specifically to the many and varied workloads of the RC. This translates to missed opportunities for consolidation and higher utilisation.

- Current flavors do not align with commercial offerings – other on-shore IaaS providers (AWS, Microsoft Azure, Rackspace) have already done the work of determining good typical offerings. We should leverage this and provide standard flavors more comparable to the rest of the market whilst also noting that particular research computing requirements can be met with other flavor classes.

- Operational experience with the existing flavors shows significant opportunity for improvement of hardware utilisation (typically loads of free CPU and plenty of memory even when a host is “full” in terms of vCPUs allocated to it).

- Provide wider scope for HTC workloads to “use up” idle CPU capacity.

- The automatically allocated ‘pt-*’ projects have been a very popular and extremely useful tool in on-boarding users, however as a no-barrier free offering they are very generous and there is no incentive for users to release the resources before the project end. The ultimate goal of providing a simple method to test-drive the RC can be achieved in a lighter footprint whilst giving the user more flexibility.

- Since the RC’s inception, new features in successive releases of OpenStack have added capabilities which can help us achieve and manage a diversified offering.

What to do?

Considerations

We propose revamping the RC flavors/instance-types based on:

- The typical nova-compute hardware footprint (1 “core” / 4 GB RAM) and the range of hardware already in the wild and ordered, from 2P x 12 “core” AMD to 4P x 16 “core” Intel. Related to this, see the box-packing problem in Other considerations below.

- The standard offerings of commercial providers operating on-shore (competitors / partners / burst-locations).

- A desire to cater more directly to a variety of use-cases with differing compute/memory requirements, e.g., web servers/services, data services (presentation, transformation, capture), remote desktops (e.g., training or SaaS), data-mining, high-throughput compute, loosely coupled high-performance compute.

- A desire to improve hardware utilisation, allowing a greater volume and variety of workloads to co-exist on the RC, together with the assumption that flavor variety in and of itself will translate to improved utilisation without the temptation for aggressive resource overcommitment.

- The assumption that reliability and consistency are intricately linked, and both should trump overall hardware utilisation.

- Given limited resource-quotas users will tend to fit their workloads into the best perceived flavor match, with the incentive that it leaves quota available for them to start new instances.

- Nodes must be able to flexibly define their own specific private flavors.

- Any attempt to standardise CPU and/or IO performance for the various flavors across Nodes is considered out-of-scope due to different hardware and storage architectures already deployed.

- Nodes must have flexibility in how they choose to implement these recommendations based on the specific needs of their local user communities and their operational ability/agility.

Core recommendations

- Creation of three new RC-wide flavor classes: standard (“m2”, following on from “m1”), compute (“c2”), memory (“r2”, r for RAM). The compute and memory flavors are referred to as optimised instances. Class is defined purely as the naming prefix from the user’s perspective (whether they differ with respect to overcommit or resource share in the back-end is the discretion of the Node).

- The compute class is used to calibrate CPU share values (as per https://wiki.openstack.org/wiki/InstanceResourceQuota) in order to maintain an approximate 1-to-1 vCPU to core/thread/module ratio for compute workloads. Flavors with fewer than 2048 CPU shares per vCPU represent a fractional share, noting that share values only come into effect under CPU contention, so such flavors can still get all clock cycles when they are available.

- Compute and memory flavors are offset by roughly -33% and +33% RAM per core respectively, relative to the current 1 core / 4 GB RAM.

- Adjust standard root ephemeral (vda) size to be more accommodating of larger snapshots and OSes like Windoze. 30GB vda as standard with secondary ephemeral (vdb) adjusted down or removed completely to accommodate.

- Proposed new flavors:

Flavors 2.0

| Class | Name | vCPUs | RAM size (GB) | primary/vda disk size (GB) | secondary/vdb (min) disk size (GB) | cpu.shares |
|---|---|---|---|---|---|---|
| standard | m2.tiny | 1 | 0.75 (768 MB) | 5 | N/A | 256 |
| standard | m2.xsmall | 1 | 2 | 10 | N/A | 512 |
| standard | m2.small | 1 | 4 | 30 | N/A | 1024 |
| standard | m1.small | 1 | 4 | 10 | 30 | 1024 |
| standard | m2.medium | 2 | 6 | 30 | N/A | 2048 |
| standard | m1.medium | 2 | 8 | 10 | 60 | 2048 |
| standard | m2.large | 4 | 12 | 30 | 80 | 4096 |
| standard | m1.large | 4 | 16 | 10 | 120 | 4096 |
| standard | m1.xlarge | 8 | 32 | 10 | 240 | 8192 |
| standard | m2.huge | 12 | 48 | 30 | 360 | 12288 |
| standard | m1.xxlarge | 16 | 64 | 10 | 480 | 16384 |
| optimised | c2.1 | 1 | 2.6 | 30 | N/A | 2048 |
| optimised | c2.2 | 2 | 5.2 | 30 | N/A | 4096 |
| optimised | c2.8 | 8 | 20.8 | 30 | 160 | 16384 |
| optimised | c2.16 | 16 | 41.6 | 30 | 320 | 32768 |
| optimised | r2.5 | 1 | 5.3 | 30 | N/A | 2048 |
| optimised | r2.10 | 2 | 10.6 | 30 | 40 | 4096 |
| optimised | r2.21 | 4 | 21.2 | 30 | 160 | 8192 |
| optimised | r2.63 | 12 | 63.5 | 30 | 320 | 24576 |

- Standard flavors are available to all, whilst compute and memory flavors will be made available to projects that justify a technical requirement for them. Access to these optimised flavor classes would then be granted in the normal project creation/amendment workflow.

- Refinements to the allocation review process that involve technical appraisal of a project’s need for optimised flavors. This also provides scope for the target Node (if any) to evaluate the request relative to availability of optimised resources.

- Project trial accounts (pt’s) have a quota limit of 4 GB RAM and 4 vCPUs. This limits them to the m2.tiny, m2.xsmall, m2.small, m2.medium and m1.small flavors, yet they can now run up to four m2.tiny instances.

- Nodes may choose to overcommit CPU and/or memory resources underpinning these proposed flavor classes, however we do not recommend overcommitment of optimised flavors until operational experience is gained with the new flavor classes. It should be noted that we expect significant utilisation improvements with the new standard flavors even without overcommitment. Additionally, Nodes will need to carefully consider their public IPv4 address capacity.

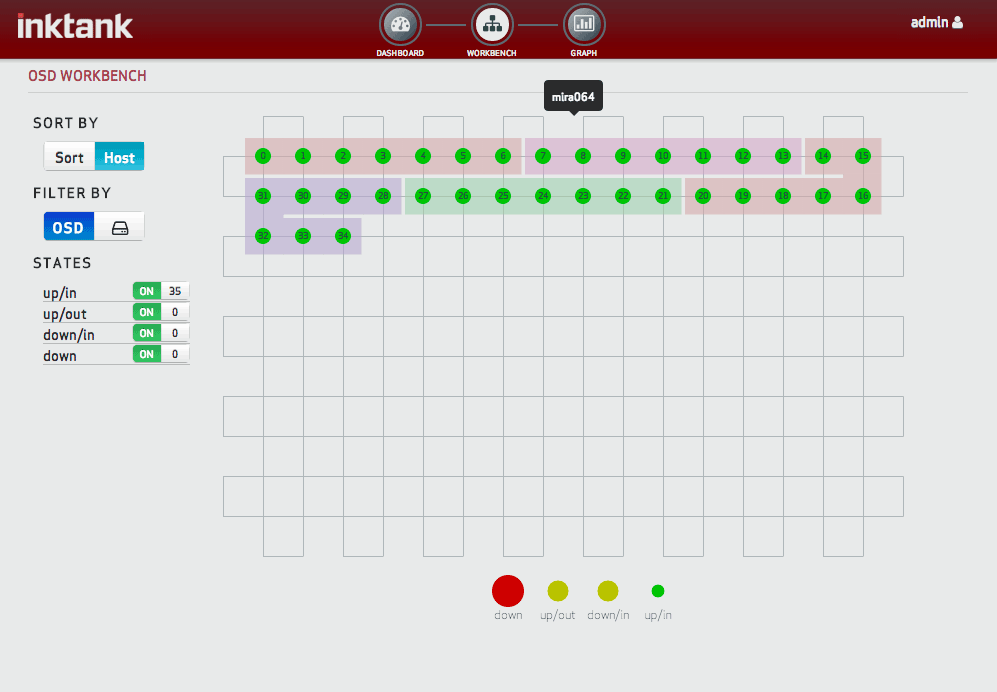

- Nodes use nova host-aggregates for flavor classes and each individual flavor, allowing class-based and fine-grained per flavor control of instance-types that can be scheduled to any particular nova-compute hypervisor/node.

- Nodes overcommitting the standard flavor class use nova per-aggregate core and memory allocation ratio settings (see the relevant blueprint and filter scheduler docs for details). This will allow, e.g., nova-compute nodes in the same Cell to be dedicated to overcommitted standard flavors whilst other nova-compute nodes service optimised flavors. By using host-aggregates in this manner the distribution of compute nodes can be changed dynamically; this is not possible with per-Cell allocation ratios (unless the node is “drained”, removed from production, and then reconfigured).
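The per-aggregate allocation ratio can be thought of as a simple accounting check made at scheduling time. The following is a rough sketch of that check, not nova's actual filter code: the function names, host size and the 1.5 ratio are illustrative assumptions, and the "smallest ratio wins" rule follows the behaviour described in the ATAQ section below.

```python
def effective_ratio(aggregate_ratios, default_ratio):
    """Pick the ratio applied to a host.

    If the host belongs to aggregates that set a ratio, the smallest
    one is used; otherwise the (hypothetical) default applies.
    """
    return min(aggregate_ratios) if aggregate_ratios else default_ratio


def host_can_fit(physical_mb, used_mb, requested_mb, ratio):
    """Accounting-only check: does the request fit under physical * ratio?"""
    return used_mb + requested_mb <= physical_mb * ratio


# Hypothetical 64 GB host with 60 GB of instance RAM already allocated.
physical_mb = 64 * 1024
used_mb = 60 * 1024
m2_large_mb = 12 * 1024  # m2.large from the proposed flavor table

standard_ratio = effective_ratio([1.5], default_ratio=1.0)   # overcommitted aggregate
optimised_ratio = effective_ratio([], default_ratio=1.0)     # no overcommit

print(host_can_fit(physical_mb, used_mb, m2_large_mb, standard_ratio))   # True
print(host_can_fit(physical_mb, used_mb, m2_large_mb, optimised_ratio))  # False
```

Because the ratio is attached to the aggregate rather than the Cell, moving a host between the two behaviours in this sketch is just a change of aggregate membership.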

Other considerations/notes

- The box-packing problem – unbalanced allocation of CPU and memory resources on a hypervisor, i.e., all CPUs allocated but memory available and vice versa (this was one of the primary drivers for a fixed ratio of resources in the “m1” flavors).

- We assume higher CPU utilisation is the priority in terms of consolidation as memory is cheaper and there is always available HTC workload that can utilise spare CPU.

- Because we assume CPU overcommitment for the standard flavors and have introduced additional lower memory footprint flavors, this problem is diminished somewhat in the standard flavors.

- Pathological scheduling with the optimised flavors could result in a hypervisor being entirely filled by “r2” instances, thus resulting in 33% spare CPU cores, however the average case (i.e., assuming even distribution of compute and memory instances) would see no change.

- Specialised capability flavors (e.g., GPU, very high-memory, high-IOPS) should be standardised where possible. GPU flavors are a potential example: only a couple of GPU types are likely to be deployed across the RC, and these options could be combined with the memory and compute optimised flavor configurations to produce new ‘mg2’ and ‘cg2’ flavor classes.

- Public IPv4 address capacity. One of the obvious issues when considering fitting more instances into the existing hardware footprint is whether we have enough IPv4 addresses to match our hardware capacity, and this is one reason resource overcommitment should be very carefully managed. With the existing flavor menu the average instance is 2.5 cores, so that’s 4 public IPv4 addresses needed for every 10 cores. There’s good reason to believe this gap will shrink if these recommendations are implemented. (At Monash we’re making provisions to “borrow” another /21 until we can deploy SDN.)

- The box-“filling” problem, particularly when no space is left for large memory VMs. Possible solution – implement a new scheduler filter that will hard-limit the number of instances of any particular flavor running on a compute node, so as to guarantee a minimum capacity of certain flavors.

- Instigate a process whereby the utilisation of compute and memory instances is monitored on a per-project basis; projects determined not to be making effective use of the optimised capabilities may lose access to those flavor classes.
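The per-flavor hard limit suggested above for the box-“filling” problem could look something like the following. This is only a sketch of the decision logic in plain Python; it does not use the nova filter API, and the function name, the `caps` mapping and the cap values are all hypothetical.

```python
from collections import Counter


def passes_flavor_cap(running_flavors, requested_flavor, caps):
    """Return True if launching requested_flavor keeps the host within its cap.

    running_flavors: flavor names of instances already on the host.
    caps: hypothetical per-host limits, e.g. {"m2.tiny": 2}.
    Flavors without an entry in caps are not limited.
    """
    cap = caps.get(requested_flavor)
    if cap is None:
        return True
    return Counter(running_flavors)[requested_flavor] < cap


# Hypothetical host that allows at most two m2.tiny instances, so that
# small instances cannot crowd out capacity for large-memory ones.
caps = {"m2.tiny": 2}
running = ["m2.tiny", "m2.tiny", "r2.21"]

print(passes_flavor_cap(running, "m2.tiny", caps))  # False: cap already reached
print(passes_flavor_cap(running, "r2.21", caps))    # True: no cap configured
```

A real filter would also have to decide whether caps are set per host, per aggregate or globally; that choice is left open here.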

Node Deployment Examples and Trade Offs

These are illustrative scenarios designed to show the possibilities afforded by adoption of these recommendations. Many of these scenarios could be mixed and matched within a Node’s deployment in order to achieve the Node’s goals.

1. All flavors can run on any compute node. (At a certain aggregate usage level no compute nodes would be able to launch any new larger memory instances, despite there being plenty of aggregate free memory capacity. This can be somewhat mitigated by use of a fill-first scheduling approach.)

2. Standard flavors separate from optimised (compute and memory) flavors, whilst the latter two coexist on the same hardware. Standard flavors overcommitted, optimised flavors not overcommitted.

3. As for #2, standard flavors separate from optimised (compute and memory) flavors. Standard flavors on AMD based compute nodes, compute and memory optimised flavors on Intel based compute nodes.

4. Separate compute nodes for, e.g., all flavors with >30GB RAM (independent of flavor class), whilst remaining compute nodes can run any flavor. This provides a means to guarantee a certain capacity of high-memory instances.

5. Mix of #3 and #4.

6. …

Discussion

None yet – will update with notable comments.

Best Practices for Overcommit

The following is intended to seed a set of living suggestions/guidelines to help Nodes implement resource overcommitment in the safest possible manner, so as not to compromise stability of user services (it must be remembered that positive sentiment is hard to win and easy to lose, people are much more likely to share poor experiences widely than they are to rave about what great infrastructure we’re providing them with).

- If you have local direct-attached storage for your nova-compute ephemeral disks then use the io_ops scheduler filter to ensure that instance-spawning does not overwhelm your host’s disks. Also set upper disk IOPS and throughput limits on the various flavor classes so that noisy neighbour effects are limited.

- Make sure you have enough swap space (plus a generous amount extra for migrations) on your nova-compute nodes to hold overflow memory pages of instances if they all suddenly started trying to use their full RAM footprint (we really don’t want to see the OOM Killer).

- Make sure your swap device/file is in some way fenced from the rest of your disk IO concerns so that hypervisor-level swapping does not contend with instance IO, e.g., separate swap device or file on the root block devices.

- Use cgroups to reserve memory for the host.

- Monitor and test relevant new Kernel features such as zswap.
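One way to read the swap-sizing guideline above is as a worst-case calculation. The sketch below assumes every instance touches its full RAM allocation at once; the function name, the host reserve and the migration headroom are hypothetical parameters, and the example figures are made up for illustration.

```python
def required_swap_gb(physical_ram_gb, host_reserved_gb,
                     allocated_instance_ram_gb, migration_headroom_gb):
    """Worst-case swap needed on a nova-compute node.

    Overflow is whatever instance RAM cannot be backed by physical RAM
    once the host's own reservation is set aside; headroom for incoming
    migrations is added on top.
    """
    ram_for_instances = physical_ram_gb - host_reserved_gb
    overflow = max(0, allocated_instance_ram_gb - ram_for_instances)
    return overflow + migration_headroom_gb


# Hypothetical 128 GB host, 8 GB reserved for the hypervisor, with
# 180 GB of instance RAM allocated (a 1.5 ratio on the remaining 120 GB).
print(required_swap_gb(128, 8, 180, 16))  # 76
```

With no overcommitment the overflow term is zero and only the migration headroom remains.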

ATAQ (Answers To Anticipated Questions)

Q: Why not mix overcommitted and non-overcommitted instances on the same compute node, e.g., have host-aggregates with different ram_allocation_ratios assigned to a single compute node.

A: Whilst we can assign multiple aggregates, the Aggregate*Filter scheduler filters will pick and use the smallest *_allocation_ratio from all relevant aggregates the node is a member of. There’s a good reason for this – it just doesn’t make sense to do anything else. Because the *_allocation_ratio settings are a soft accounting metric, i.e., they only affect the scheduling decision of whether a node can support a new instance, they have no effect on the absolute or relative share of resources each instance has on any particular node. E.g., if the scheduler applied different ram_allocation_ratios on a single node based on the flavor of instances launched, the effective ram_allocation_ratio would end up as something of a weighted average and all instances on the node would be equally affected by ram contention.

Q: What’s with the names / why don’t we copy AWS names?

A: There appears to be no good naming convention to mimic (probably due to English lacking specific and unambiguous words to describe size in various dimensions). AWS have made a hash of their own convention as their flavor offerings have proliferated; it’s now virtually impossible to even guess what kind of instance you’re looking at, e.g., cr1.8xlarge is apparently a memory optimised instance – go figure! Rackspace simply use the memory size as the name, which is not a bad idea but breaks if you want different vCPU counts with the same memory size. Azure uses A1 – A7, which is possibly the single most intuitive aspect of Azure since it was released (clearly marketing wasn’t involved with that ;-). We’ve made suggestions which continue the tradition of somewhat ambiguous descriptors for the standard flavors but give more precise names to the compute and memory flavors, which hopefully speak for themselves. A previous draft used Aussie beer sizes for the standard flavors: pony, small, pot, halfpint, pint, jug, partykeg…

Q: Why the strange/not-round memory sizes for the optimised flavors versus the standard?

A: To account for virtualisation overheads on the host (page tables, block cache, etc.). Take a browse through the AWS and Azure offerings and you’ll see they do this too. The standard flavors keep round sizes because we expect conservative overcommitment to be commonplace for them (so there’s little reason to adjust for their virt overheads), whereas we expect little if any overcommitment with the optimised flavors.

Parking Lot

Ideas / pipe-dreams go here (mostly requiring dev and ops work)…

- Introduce a spot-instance flavor class and modify the filter scheduler to introduce a retry-style filter that iteratively removes running spot-instance metrics (in batches of some configurable spot-instance scheduling time-slice) from the scheduling meter data until running spot-instances are entirely ignored by the scheduler (thus logically preempted). The final scheduling outcome may then include a linked action to terminate some spot-instance/s on the chosen compute node.

- Extend the existing Nova resource quota feature to set memory-cgroup parameters. Judicious use of the limit_in_bytes and soft_limit_in_bytes controls (particularly the latter) could be used to mix standard and memory optimised instances on the same compute node, e.g., a standard instance launched in a 1.5 ram_allocation_ratio aggregate would have a corresponding memory soft_limit = virtual_ram_size / 1.5, whereas a memory instance in a 1.0 ram_allocation_ratio aggregate would have memory soft_limit = virtual_ram_size.

- Use containers (e.g., lxc) to fence/partition relevant resources on the compute nodes such that overcommit can be implemented consistently amongst classes of flavor. This might mean splitting a physical compute node into multiple compute containers, each acting as a nova-compute node.

- Implement a new scheduler filter that will hard-limit the number of instances of any particular flavor running on a compute node – a possible work-around for the box-packing problem.
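The memory-cgroup idea above reduces to a small calculation per instance. The following sketch shows it under the assumption that the hard limit stays at the flavor's full RAM size and only the soft limit is scaled by the aggregate's ram_allocation_ratio; the function name is hypothetical and nothing here reflects an existing Nova feature.

```python
MIB = 1024 * 1024


def memory_cgroup_limits(virtual_ram_mb, ram_allocation_ratio):
    """Compute memory-cgroup settings for one instance.

    limit_in_bytes stays at the flavor's full RAM size, while
    soft_limit_in_bytes = virtual_ram_size / ram_allocation_ratio,
    as suggested in the parking-lot item above.
    """
    hard = virtual_ram_mb * MIB
    soft = int(hard / ram_allocation_ratio)
    return {"limit_in_bytes": hard, "soft_limit_in_bytes": soft}


# m2.small (4 GB) in a 1.5-ratio aggregate versus r2.5 (about 5.3 GB)
# in a 1.0-ratio aggregate.
standard = memory_cgroup_limits(4 * 1024, 1.5)
memory_optimised = memory_cgroup_limits(int(5.3 * 1024), 1.0)
print(standard)
print(memory_optimised)
```

Under memory pressure the kernel would reclaim first from cgroups that exceed their soft limit, so in this sketch the standard instance gives up pages before the memory optimised one does.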